By Will Palmer | Growth Lab | growthlabseo.com

The 2026 Guide to AI SEO and Search

| HOW AND WHY THIS GUIDE WAS BUILT Most marketing guides are recycled opinion. This one is different. Over the past year, I’ve followed the most trusted and authoritative voices in web marketing, SEO, AI, and digital strategy: researchers like Lily Ray, Rand Fishkin, Amanda Natividad, Jono Alderson, Miriam Ellis, and Kevin Indig, among others. When they published data on LinkedIn or shared original research from industry peers, I saved it. All of it. I then fed over a gigabyte of 2026 AI and search reports into NotebookLM to synthesize patterns, then uploaded both the raw articles and those synthesized resources into Claude to distill everything into actionable knowledge that benefits a business owner, a marketing manager, or an agency strategist equally. What you’re reading is the intersection of data, expert consensus, and real-world pattern recognition. Not a prediction. A map. |

The Rules Have Changed

Something fundamental shifted in 2025 and accelerated hard into 2026. The playbook that built countless businesses on Google was this:

- Pick keywords

- Build a page

- Earn some links

- Wait to rank

Ah, if only it were still that easy. For the record, Google is not dying.

What has changed is that a new layer of intelligence has been placed on top of every search experience, and that layer plays by entirely different rules.

ChatGPT now processes 5.8 billion web visits per month. Google’s AI Mode and AI Overviews have restructured how answers are surfaced before a user ever clicks a result. Perplexity, Claude, Gemini, and a dozen other AI systems are forming opinions about your brand (right now) based on signals most businesses have never thought to manage.

The businesses winning in this environment aren’t the ones who have mastered a new AI trick. They’re the ones who finally got the fundamentals right. Marketing, at its core, has always been about science. Understanding cause and effect, signal and response, reputation and reach. What AI has done is remove the slack that allowed shortcuts to work. The gap between ‘optimized’ and ‘obviously good’ has never been wider.

This guide synthesizes the most important research, data, and expert analysis published in early 2026. It is written for business owners who need to understand what’s at stake and for marketing managers who need to act on it. Every section is grounded in verified data, not speculation.

How AI Search Works in 2026

| THE CORE TRUTH OF 2026 You don’t rank for keywords anymore. You are inferred. Search engines and AI systems build models of entities: your brand, your expertise, your reputation across the web. Rankings and citations are outputs of how well those models understand and trust you. Your strategy needs to start there. |

Before you can optimize for AI search, you need to understand how it works, because it is fundamentally different from traditional search engines and most businesses are making decisions based on the wrong mental model.

ChatGPT is Not a Search Engine. Then What is It?

Rand Fishkin of SparkToro put it plainly: LLMs are ‘spicy autocomplete.’ When ChatGPT answers a question, it is not looking up a verified answer in a database. It is calculating the most statistically probable sequence of words based on everything it has been trained on and, when needed, a real-time web search.

This has a profound implication: when you ask an AI why it recommended a particular brand, the answer it gives you is itself a hallucination. The AI cannot access its own neural pathways. It doesn’t know why it chose what it chose. It generates a plausible-sounding explanation using the same probability mechanics it uses for everything else.

| CRITICAL IMPLICATION FOR MARKETERS Never ask an AI tool how it came up with an answer and treat that explanation as strategic insight. It isn’t. AI recommendations are statistical outputs — and that means ‘tracking rank #1 in ChatGPT’ is measuring noise, not signal. Rand Fishkin’s research found less than a 1% chance that two users get the exact same brand list for the same query. |

How ChatGPT Decides Which Websites to Cite in Responses

Research by David McSweeney revealed the likely architecture behind ChatGPT’s responses. It is not one model doing everything. It’s a cascade of decisions:

- A small routing model first determines whether to answer from training data or conduct a real-time web search

- If a search is needed, a separate model handles finding and filtering web results before the main model writes the response

- ChatGPT doesn’t pull whole pages — it extracts small, semantically relevant snippets and scores them by meaning, not keyword match

- Speed and processing cost matter: slow or technically complex pages may be skipped entirely, even if the content is excellent

- For complex, timely, or niche questions, real-time web context is essential — training data alone is rarely enough

The practical upshot: clarity wins. Clean structure, direct answers, and well-organized content make it easier for AI systems to extract usable snippets. A slow, JavaScript-heavy site with buried content is structurally invisible to these systems regardless of how good the content itself is.

The Fastest Way to Lose AI Visibility is to Lose Google Visibility First

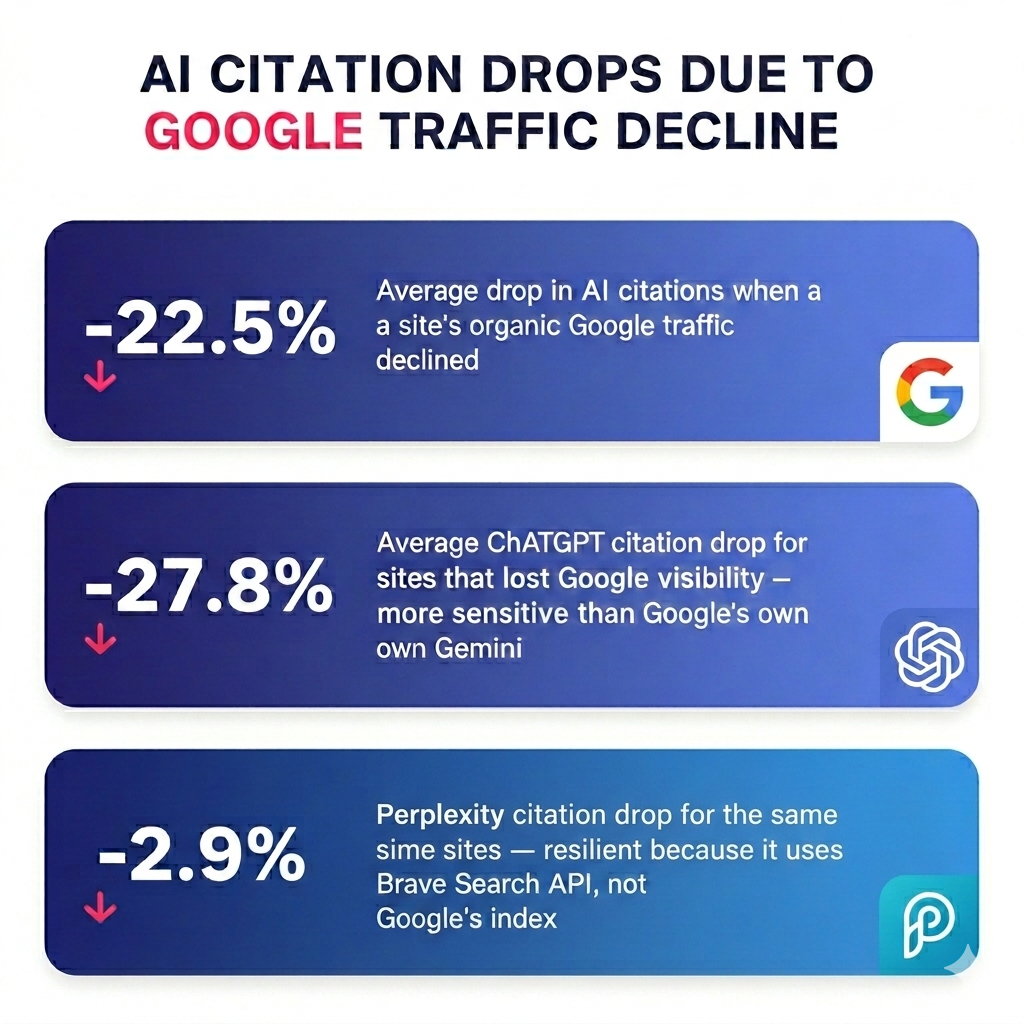

One of the most important findings of 2026 comes from Lily Ray’s analysis of 11 sites that were penalized by Google algorithm updates. She tracked their performance across Google search, ChatGPT, Google AI Mode, Gemini, and Perplexity. The results were striking:

| -22.5% | Average drop in AI citations when a site’s organic Google traffic declined |

| -27.8% | Average ChatGPT citation drop for sites that lost Google visibility — more sensitive than Google’s own Gemini |

| -2.9% | Perplexity citation drop for the same sites — resilient because it uses Brave Search API, not Google’s index |

The conclusion is clear: the fastest way to lose AI visibility is to lose Google visibility first. ChatGPT is actually more sensitive to Google ranking drops than Google’s own AI systems. If your organic foundation is cracked, your AI citation strategy is built on sand.

| THE PERPLEXITY EXCEPTION Perplexity uses Brave Search API and its own crawler, not Google’s index. This means sites penalized by Google can maintain stronger Perplexity visibility. For brands building an AI search strategy, diversifying the signals that feed Perplexity — independent backlinks, original content, non-Google citations — is increasingly important. |

Keyword Ranking in AI Does Not Exist

SparkToro and Gumshoe conducted a large-scale study asking hundreds of people to write prompts expressing the same underlying intent. The results shattered a foundational assumption of traditional SEO:

| 0.081 | Average semantic similarity score between prompts expressing the same intent — meaning people phrase the same question in dramatically different ways |

Eight people looking for youth basketball leagues produced eight completely different prompts — some focused on location, some on age, some on coaching philosophy, some used nicknames for the AI. This is not an edge case. It is how humans naturally communicate.

The good news: despite massive variation in phrasing, AI models often return similar clusters of brands across those phrasings. AI is good at recognizing underlying intent even when surface language varies wildly. But this reframes the entire optimization challenge.

The question is no longer ‘which keyword do I rank for?’ It is: ‘Am I showing up reliably across the full semantic neighborhood of this intent?’

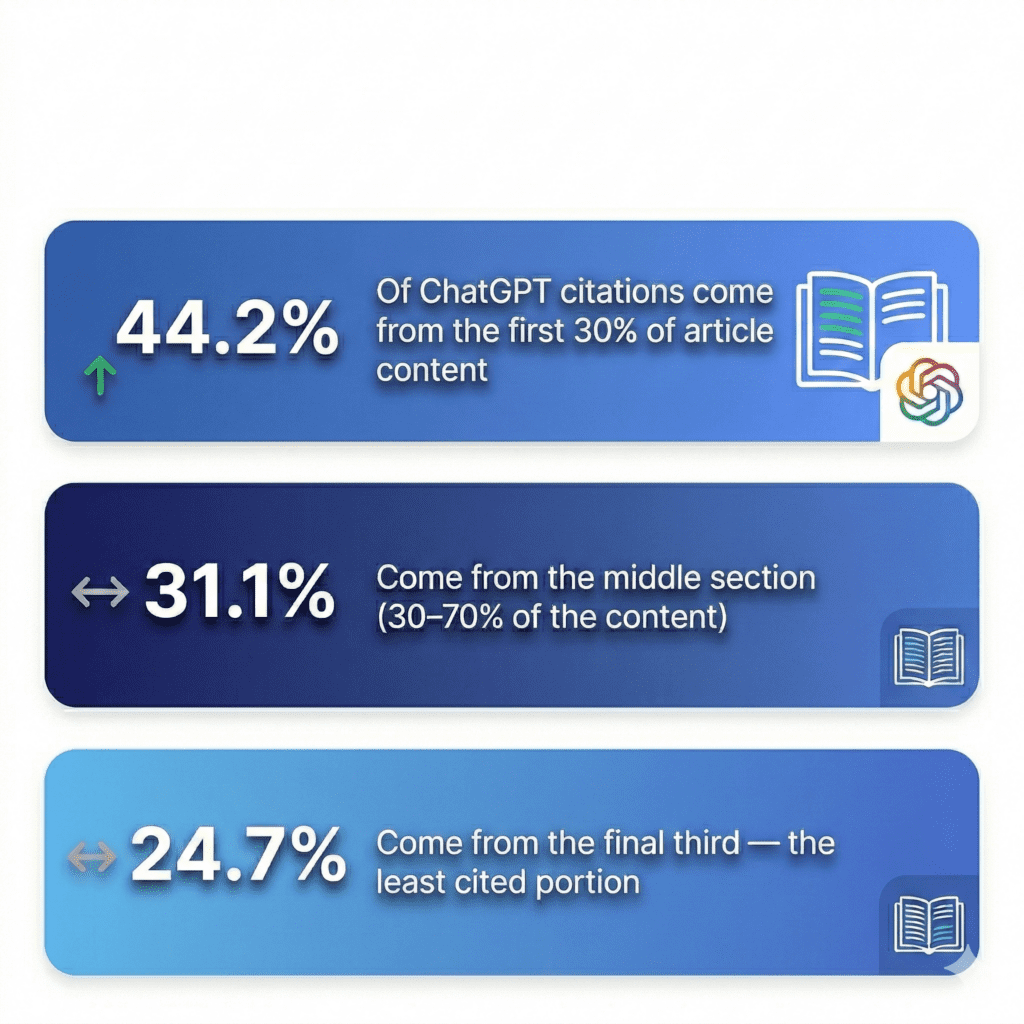

Kevin Indig’s analysis of 1.2 million AI answers and 18,012 verified citations produced some of the most actionable structural data available on what content AI systems prefer to quote. If you write or commission content, this section will change how you do it.

How Does AI Rank Your Content?

| 44.2% | Of ChatGPT citations come from the first 30% of article content |

| 31.1% | Come from the middle section (30–70% of the content) |

| 24.7% | Come from the final 30% of the content, the least cited portion |

Within paragraphs, the pattern holds: 53% of citations come from the middle of paragraphs, 24.5% from opening sentences, and 22.5% from closing sentences.

The implication is not that you should bury your key ideas in paragraph middles; it’s that you should front-load your most important insights into the first 30% of every page, where the concentration of citations is highest.
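To see where a page's key claims actually sit, a rough position check can help. The sketch below is only an illustration: the function name and the character-offset approach are assumptions of this example, not the method used in Kevin Indig's study. It reports which of the three sections above a given sentence starts in.

```python
def position_bucket(article_text: str, key_sentence: str) -> str:
    """Report which section of the article a sentence starts in.

    Position is measured by character offset, which is a simplification.
    The 30% and 70% cut-offs mirror the sections described in this guide.
    """
    start = article_text.find(key_sentence)
    if start == -1:
        return "not found"
    relative = start / max(len(article_text), 1)
    if relative < 0.30:
        return "first 30%"
    if relative < 0.70:
        return "middle (30-70%)"
    return "final 30%"
```

Run it against the one or two sentences you most want quoted. If they land in the final section, consider moving them up.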

How to Structure Content to Get Cited by AI

Content that AI systems cite most frequently shares five measurable traits:

- Definitive language: Highly cited content is 2x more likely to use declarative phrasing

- Conversational Q&A structure: 2x more likely to include explicit questions; 78.4% of AI citations come from content organized around heading-as-question formats

- Entity richness: 20.6% proper nouns in highly cited content versus 5–8% in lower-performing content — specific brands, tools, people, and organizations signal depth and specificity

- Balanced analytical tone: A subjectivity score of 0.47 — the tone of a good analyst, neither academic nor promotional. Think briefing document, not marketing copy

- Business-grade clarity: Flesch-Kincaid grade level 16 — clear enough for a senior professional, not dumbed down, not jargon-heavy
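The grade-level trait is the easiest of the five to check yourself. The sketch below applies the standard Flesch-Kincaid grade formula, 0.39 × (words per sentence) + 11.8 × (syllables per word) − 15.59. The syllable counter is a crude vowel-group heuristic assumed for this example, so treat the result as an estimate rather than the exact score a dedicated readability tool would report.

```python
import re


def count_syllables(word: str) -> int:
    """Approximate syllables as runs of vowels (a rough heuristic)."""
    groups = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(groups))


def flesch_kincaid_grade(text: str) -> float:
    """Estimate the Flesch-Kincaid grade level of a block of text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = syllables / len(words)
    return 0.39 * words_per_sentence + 11.8 * syllables_per_word - 15.59
```

Short, plain sentences score low and long, polysyllabic ones score high, so the number is most useful for comparing drafts of the same page against each other.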

| THE FORMAT THAT WINS Briefing-style content outperforms narrative ‘ultimate guide’ writing in AI citation rates. Structure your content like a smart analyst briefing a client: state conclusions first, use headings as questions, name specific tools and organizations, and back every claim with a clear source. The ‘wall of wisdom’ essay format gets absorbed and averaged out. The direct briefing format gets cited. |

The ‘Too Much SEO’ Penalty Is Real

One of the most significant penalties documented in 2026 targets a tactic that became popular precisely because it seemed low-risk: the self-promotional listicle.

Sites publishing large volumes of articles that ranked their own company as ‘the best’ solution in their category — sometimes thousands of such pages — were devastated by Google’s January 2026 algorithm action. One documented case: a company with over 3,000 self-promotional listicles lost 66% of its projected monthly organic traffic across multiple core updates. AI citation rates mirrored the collapse exactly.

| -49% | Visibility drop experienced by sites using self-promotional listicle tactics following January 21, 2026 algorithm action |

This matters beyond the direct penalty. When Google’s Review System guidelines are applied to listicle content — which is now how these pages are evaluated — the bar for quality requires genuine first-hand experience with every product reviewed. Very few companies publishing competitive listicles can meet that standard.

The rule: Any tactic done ‘for GEO’ that could trigger a Google spam penalty will ultimately hurt AI search performance too. The two are inseparably linked.

Local SEO: What Gets You Better Visibility in Google Maps

For businesses with physical locations or defined service areas, 2026 has introduced a series of ranking factors that didn’t meaningfully exist five years ago. The convergence of real-time data, AI-powered local search, and consumer review behavior has created both new vulnerabilities and new opportunities.

Business Hours Are Now a Ranking Signal

Business operating hours have become the fifth most influential local search ranking factor — a position that would have been unthinkable even two years ago.

The mechanism is straightforward but its implications are significant: AI systems and Google’s local ranking algorithms now evaluate whether a business is currently open when determining how prominently to feature it.

Rankings degrade in the final hour of operation. A business listed as closing at 5pm may see measurably reduced visibility starting around 4pm.

Businesses staying open while competitors have closed gain a temporary competitive advantage in local pack rankings.

For high-intent local search (the queries where someone is ready to contact a business right now) this creates an actionable opportunity: audit competitor hours, identify windows where you can maintain coverage they don’t, and consider whether a 24-hour answering service could justify the investment by preserving ranking visibility around the clock.
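The competitor-hours audit described above can be sketched in a few lines. Everything here is illustrative: the function name is hypothetical, hours are whole numbers on a 24-hour clock, and overnight schedules are not handled.

```python
def uncontested_hours(
    own_hours: tuple[int, int],
    competitor_hours: list[tuple[int, int]],
) -> list[int]:
    """Return the hours of the day when you are open and every competitor is closed.

    Each tuple is (opening_hour, closing_hour) on a 24-hour clock.
    Assumes same-day schedules only (no overnight hours).
    """
    own_open, own_close = own_hours
    windows = []
    for hour in range(own_open, own_close):
        all_closed = all(
            not (c_open <= hour < c_close) for c_open, c_close in competitor_hours
        )
        if all_closed:
            windows.append(hour)
    return windows


# Hypothetical example: you are open 8:00-20:00, competitors 9:00-17:00 and 8:00-18:00.
print(uncontested_hours((8, 20), [(9, 17), (8, 18)]))  # [18, 19]
```

In this made-up case the only uncontested window is 6pm to 8pm, which is where extended coverage or an answering service would matter most.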

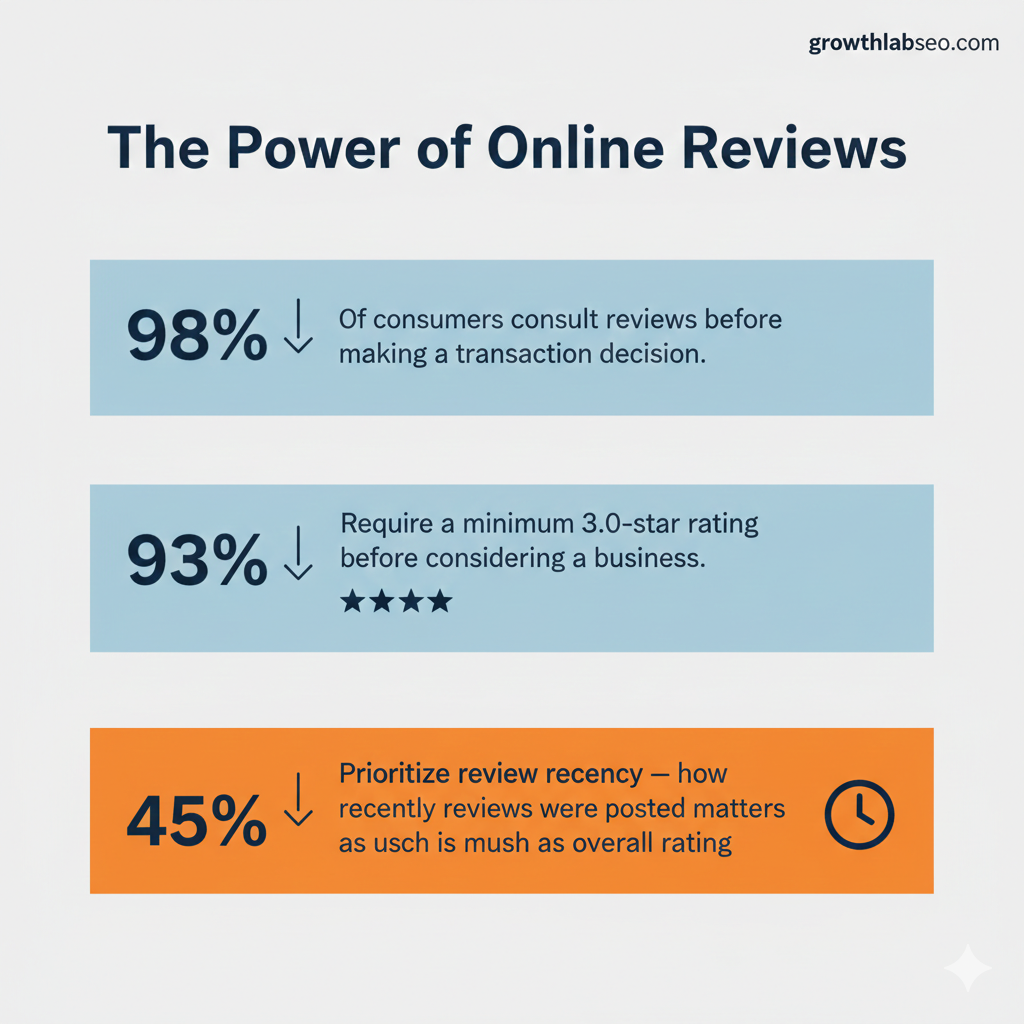

Reviews Are Now the Main Ranking Driver in Local Search

| 98% | Of consumers consult reviews before making a transaction decision |

| 93% | Require a minimum 3.0-star rating before considering a business |

| 45% | Prioritize review recency — how recently reviews were posted matters as much as overall rating |

Review recency is now the 11th most influential local ranking factor, placing it above many traditional signals that agencies have spent years optimizing.

A business with 200 reviews from two years ago is at a measurable disadvantage compared to a competitor with 40 reviews from the past six months.

Equally important: being featured on expert-curated ‘best of’ lists on authoritative third-party domains is now the single strongest predictor of AI citation.

Earning your way onto FindLaw (for legal), Healthgrades (for medical), or relevant industry directories isn’t just a citation strategy; it’s an AI visibility strategy.

Miriam Ellis of Whitespark notes that ‘AI is bringing citations back into the digital marketing limelight’ after years of declining focus.

Your Google Business Profile Should Have an Exact Address – Not a Service Area

Service area businesses that hide their physical address in Google Business Profile may be triggering a documented bug that misplaces their map pin, sometimes by significant distances.

This ‘phantom pin’ placement detaches the ranking radius from the actual business location, suppressing visibility in the precise areas the business serves.

If you are a service area business that has hidden your address and noticed unexplained drops in local ranking radius, this is likely the cause. Audit your Business Profile settings and use Local Ranking Grids to identify pin placement discrepancies.
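If you can read the displayed pin's latitude and longitude, a quick distance check puts a number on the discrepancy. The sketch below uses the standard haversine formula. How you obtain the two coordinate pairs is outside its scope, and the function name is an assumption of this example, not part of any Google or ranking-grid tool.

```python
import math


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in kilometers between two latitude/longitude points."""
    earth_radius_km = 6371.0
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = math.radians(lat2 - lat1)
    d_lambda = math.radians(lon2 - lon1)
    a = (
        math.sin(d_phi / 2) ** 2
        + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    )
    return 2 * earth_radius_km * math.asin(math.sqrt(a))
```

Pass your real address coordinates as the first point and the pin's coordinates as the second. A result of more than a few hundred meters is worth investigating alongside the ranking grid.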

Ranking in Google is No Longer the Definition of Success. Here’s What Is.

Almost every SEO brief ever written has started with a sentence like ‘We want to rank for X.’

Jono Alderson, one of the most respected technical SEO consultants in the world, argues this is precisely the wrong place to start, and the evidence supports him.

Why ‘Ranking for Keywords’ Is the Wrong Goal

Rankings are not goals. They are outputs. Search engines and AI systems build entity models: a composite understanding of what your brand is, what it knows, who it serves, and how much it should be trusted.

Pages are inputs into that model. Rankings are what the model produces when a relevant query arrives.

When your strategy begins with ‘how do we rank for X,’ you are trying to manipulate an output without changing the system’s understanding of the underlying entity.

That’s why keyword-based SEO often feels unpredictable — tweak a title, add some copy, acquire a link, sometimes rankings move, often they don’t, rarely does it stick.

The standard playbook of building keyword-matched landing pages filled with ‘broadly applicable advice’ is systematically failing.

AI systems are extremely efficient at collapsing competent summaries of widely available information into a single canonical answer. If your page is a marginally better version of ten similar pages, you are building raw material for someone else’s AI synthesis rather than a durable asset for your own visibility.

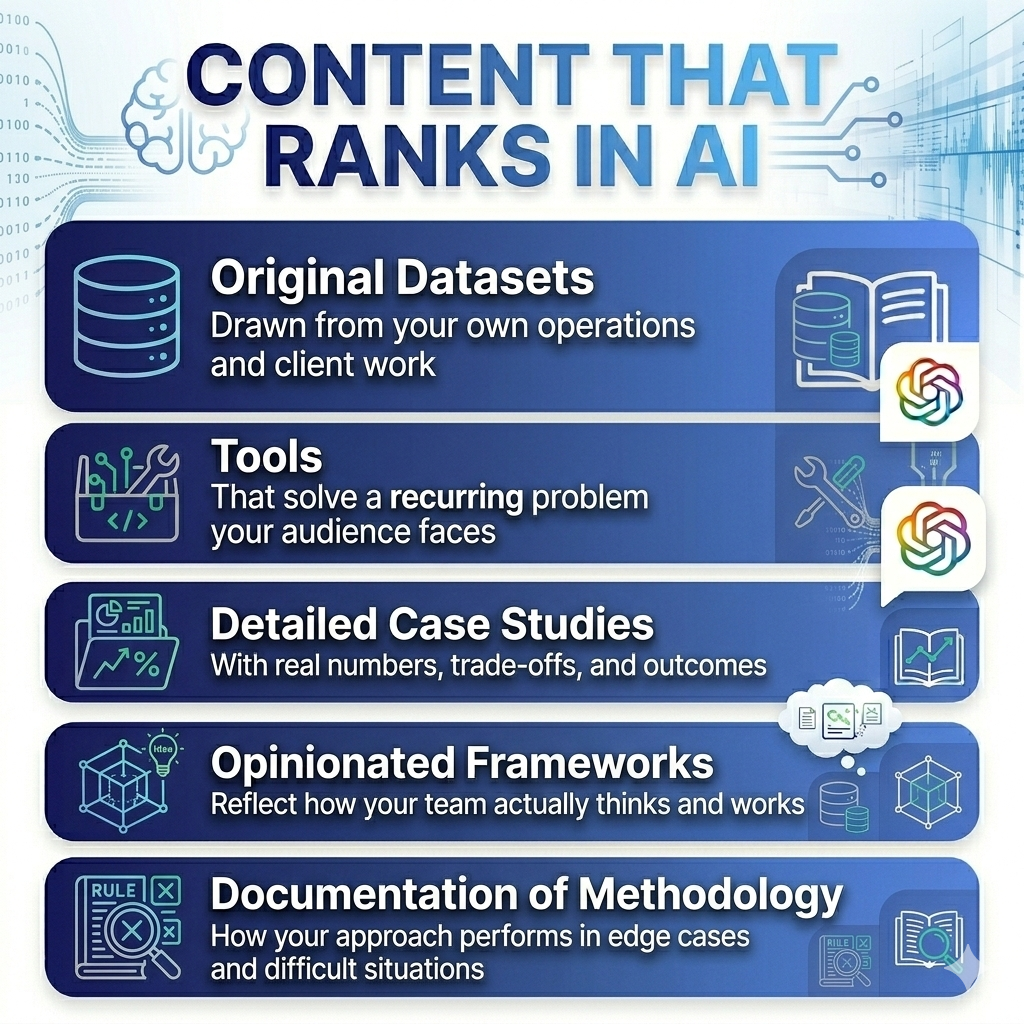

Your Content Requires Genuine Expertise and Experiences

The uncomfortable question every business must now answer is: what can we create that our competitors cannot easily replicate?

Not longer content. Not better-optimized content. Something structurally different: content that requires genuine expertise, real experience, or proprietary access to produce.

Jono Alderson identifies the types that survive AI compression:

- Original datasets drawn from your own operations and client work

- Tools that solve a recurring problem your audience faces

- Detailed case studies with real numbers, trade-offs, and outcomes

- Opinionated frameworks that reflect how your team actually thinks and works

- Documentation of how your methodology performs in edge cases and difficult situations

These assets are expensive to produce and expose how you operate. That is the point. Distinctive, experience-backed content compounds over time. Generic landing pages decay. In an AI-summarized world, indistinguishable content gets abstracted away.

Fanout Keywords: The New Targeting Unit

The era of the two-word keyword is over. Users, especially users of AI tools, don’t search anymore. They ask. They explain. They qualify. They add constraints.

A query that once read ‘CRM software’ now reads ‘best CRM for B2B SaaS startup under 50 employees that integrates with HubSpot and has strong reporting.’

These multi-variable, intent-rich prompts are called Fanout Keywords.

They represent how real buyers describe their actual situation. Your content strategy needs to address the specific qualifiers your audience uses: industry, company size, budget sensitivity, integration requirements, use case specifics, and experience level. Generic content that speaks to everyone reaches no one in an AI-mediated world.
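One way to map the qualifiers your content needs to cover is to enumerate their combinations. This is a minimal sketch. The function name and the sample qualifiers are hypothetical, and real prompts are far messier than a simple cross-product.

```python
from itertools import product


def fanout_prompts(base: str, qualifiers: dict[str, list[str]]) -> list[str]:
    """Combine a base query with every combination of qualifier phrases."""
    combos = product(*qualifiers.values())
    return [f"{base} {' '.join(combo)}" for combo in combos]


# Hypothetical qualifiers for the CRM example above.
prompts = fanout_prompts(
    "best CRM",
    {
        "segment": ["for B2B SaaS startups", "for agencies"],
        "integration": ["that integrates with HubSpot"],
    },
)
for prompt in prompts:
    print(prompt)
```

The resulting list works as a coverage checklist: each line is a situation your content should speak to directly.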

| THE ENTITY AUTHORITY PRINCIPLE In an AI-mediated world, inference is made at the level of entities, not pages. Being the best and being understood as the best are different problems. If the wider web — credible experts, industry publications, review platforms, third-party directories — does not reflect your expertise, the system has no reason to believe you. Your on-site content is only one input into a much larger model. |

How AI Remembers Your Business

Jono Alderson introduced one of the most important frameworks for understanding how AI ecosystems evaluate businesses: the Machine Immune System. It reframes technical SEO not as a checklist of ranking factors, but as a survival strategy in a world where machines remember everything.

Every interaction between a machine system and your business leaves a trace. Web crawlers log response times and error codes. Payment processors record declined transactions and timeouts. AI systems note data inconsistencies between your website, your Google Business Profile, and third-party directories. Each individual trace is a signal. At scale, those signals compress into durable summaries.

A pattern of slow page loads becomes ‘site is unreliable.’ Inconsistent business hours across platforms become ‘data cannot be trusted.’ A schema markup error becomes ‘information quality is low.’
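Data inconsistencies of this kind are easy to catch before a machine does. The sketch below groups platforms by their normalized name, address, and phone number. The normalization rules and function names are assumptions of this example, and the listing data itself would have to be collected by hand or through each platform's own tools.

```python
import re
from collections import defaultdict


def normalize_listing(name: str, address: str, phone: str) -> tuple[str, str, str]:
    """Lowercase, collapse whitespace, and keep only the digits of the phone number."""

    def squash(value: str) -> str:
        return re.sub(r"\s+", " ", value.strip().lower())

    return squash(name), squash(address), re.sub(r"\D", "", phone)


def group_by_listing(
    listings: dict[str, tuple[str, str, str]],
) -> dict[tuple[str, str, str], list[str]]:
    """Group platform names by normalized listing; more than one group means a mismatch."""
    groups: dict[tuple[str, str, str], list[str]] = defaultdict(list)
    for platform, (name, address, phone) in listings.items():
        groups[normalize_listing(name, address, phone)].append(platform)
    return dict(groups)
```

If the function returns a single group, every platform agrees. Each extra group is a version of your business details that some system may be treating as the truth.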

These summaries don’t just stay with one system. They spread, shared across the network of crawlers, recommenders, and AI models that collectively decide who gets surfaced and who gets skipped.

The critical asymmetry: recovery from a bad machine reputation is far harder than avoiding one. Once a negative signal has been compressed into a summary and circulated, fixing the underlying issue doesn’t immediately clear the record.

The machine immune system is efficient at remembering damage and slow to recognize repair.

What a Healthy Technical Foundation Looks Like in 2026

Alderson’s ‘Death of a Website’ diagnosis applies to an alarming number of business sites: beautiful from the outside, broken underneath. Heavy JavaScript frameworks (React, Vue) that look modern but deliver bloated, slow experiences. Sites where Core Web Vitals metrics are green in dashboards while real users experience latency. Pages where the actual content is a rumor buried under layers of build scaffolding.

The technical priorities that determine machine-readable health in 2026:

- Semantic HTML structure: Clear heading hierarchies (H1, H2, H3) that communicate page architecture to both AI crawlers and users

- Page speed and Core Web Vitals: Fast loading is not just a user experience metric — slow pages get skipped by AI content extraction systems

- Images at 1200px minimum width for Google Discover eligibility — smaller images appear as thumbnails and earn significantly fewer clicks

- Clean robots.txt configuration that doesn’t accidentally block AI crawlers: GPTBot, ClaudeBot, Claude-SearchBot, and PerplexityBot all use different user agents

- FAQ and HowTo schema markup that structures content for AI snippet extraction

- Consistent NAP (Name, Address, Phone) data across every platform — inconsistencies create entity authority gaps

- No ‘nopagereadaloud’ or ‘notranslate’ meta tags, which prevent pages from entering Google Discover entirely
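The robots.txt item in the list above can be verified with Python's standard library. The sketch below checks the four crawler user agents named in the list against the contents of a robots.txt file. The helper name and the sample rules are assumptions of this example.

```python
from urllib.robotparser import RobotFileParser

# User agents named in this guide; extend the list as needed.
AI_USER_AGENTS = ["GPTBot", "ClaudeBot", "Claude-SearchBot", "PerplexityBot"]


def blocked_ai_crawlers(robots_txt: str, url: str = "https://example.com/") -> list[str]:
    """Return the AI crawlers that the given robots.txt rules block from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_USER_AGENTS if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks one AI crawler and allows everything else.
sample = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(blocked_ai_crawlers(sample))  # ['GPTBot']
```

Paste in your live robots.txt and test the URLs that matter most. An empty list means none of these crawlers is blocked for that URL.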

| THE SIMPLICITY PRINCIPLE Simplicity is the new technical SEO. A clean, fast, semantically structured site that a human can read in the source code will outperform a complex, ‘modern’ site built for developer elegance every time. The web’s most performant sites are increasingly its simplest ones. |

The marketing funnel is not dead, but it is a dangerously incomplete map of how people actually make decisions. Rand Fishkin of SparkToro has documented what has replaced it: the Pinball Customer Journey.

How Buyers Actually Move Through the Decision Process

A person considering a purchase no longer moves cleanly from awareness to consideration to decision. They bounce.

They start with a newsletter, jump to Google, ask ChatGPT, check Reddit, DM a friend, browse LinkedIn, watch a YouTube review, read a Substack post, and then maybe land on your website.

Sometimes in that order. Often not. Sometimes the last thing they do before converting is check your Google reviews from their phone in the parking lot.

None of that is captured by traditional attribution models. Your analytics will tell you the last click. It will miss the podcast that first introduced your brand, the Reddit thread that built trust, the Substack that planted the idea.

Marketers who optimize only for measurable attribution are systematically over-investing in paid search and under-investing in the channels that actually shape buying decisions.

The Zero-Click Reality

Every major platform has converged on the same economic model: keep users inside. TikTok, Instagram, YouTube, Reddit, LinkedIn, Facebook, and now Google and ChatGPT all reduce the visibility of outbound links or eliminate them entirely.

Traffic sent outward from these platforms is declining even as usage of the platforms themselves grows.

Rand Fishkin’s prediction for 2026: Internet users will consume ten times as much content via AI summaries as they will by visiting actual pages. The primary audience for your written content is increasingly a machine, not a human.

This is not an argument to stop creating content. It is an argument to create content that machines can accurately summarize and attribute. The goal is not to get clicks; it is to build a reputation so well-documented across the web that when AI systems form opinions about your industry, your brand is part of the answer.

Are Website Clicks Down for Everyone? Yes. Here’s Why:

The most important implication of the Pinball Journey and Zero-Click reality is that brand investment is no longer optional.

In the old funnel world, you could serve demand without building a brand. In today’s pinball world, brand recognition drives the click-through rates that reward you across every channel — Google, ChatGPT, Reddit, and everywhere else.

Seven marketing failures that are more common and more damaging than ever:

- Targeting the wrong audience and drawing wrong conclusions from accurate data

- Siloed departments (SEO, PPC, social, PR) sending inconsistent messages across the pinball path

- Investing only in demand capture with no brand investment — the ‘all demand, no brand’ failure

- Ignoring key channels where your audience actually spends time (Reddit, Substack, podcasts, YouTube)

- Attribution addiction — over-weighting the measurable last click and ignoring unmeasurable influence

- In-house obsession — refusing to acknowledge channels where internal teams lack genuine competency

- Sabotage by survey — asking only existing customers how they found you and drawing universal conclusions from a biased sample

Amanda Natividad of SparkToro describes the content formula that works in 2026 as ‘vibes meet facts’ — emotional resonance carried by verifiable evidence. This is not a new insight about human psychology. What is new is the precise way it intersects with AI content evaluation.

Why AI Writing is Destroying Engagement

The proliferation of AI-generated content has created a new category of reader skepticism. Audiences have become acutely sensitive to the patterns of LLM-generated writing: the hedge-everything tone, the ‘it’s important to note’ filler phrases, the bullet-point-everything structure, the lack of any specific named entity or concrete example.

When readers detect these patterns, trust collapses.

Highly cited, high-engagement content in 2026 shares a specific voice: the analytical human.

Clear, confident, specific.

It makes definitive claims and supports them with verifiable data. It names real companies, real tools, real people. It uses the language of a senior professional briefing a peer, not a content factory producing volume.

The Secret Content Formula for Winning Needs ‘Vibes’

Facts alone don’t change minds. People selectively accept information that aligns with what they already believe, and correcting misinformation can backfire when it threatens someone’s identity.

This is Kahneman’s fast-and-slow thinking in action: we form impressions quickly and emotionally, then rationalize them with evidence if it’s available.

The formula that works: emotional story first, verifiable data to support it. The vibes earn you attention. The facts earn you credibility. Neither alone is sufficient.

Amanda Natividad’s framework for choosing the right kind of facts:

- For market behavior claims (industry trends, platform usage, adoption rates): prioritize recency over relevance — the most recent data available

- For human behavior claims (psychology, decision-making, persuasion): prioritize relevance over recency — well-established research holds

- Macro-framing facts establish why the market should care about what you’re saying

- Micro-framing facts establish why this specific user should care

- Hard proof facts (case studies, client data, measurable outcomes) establish that what you offer actually works

- Counterpoint facts acknowledge legitimate objections and build credibility by engaging them honestly

| THE AI WRITING TEST Read your content back and ask: could an AI have written this without access to real expertise? If the answer is yes, the content is not differentiated enough to be cited. Every paragraph should contain at least one element that requires genuine knowledge to produce: a specific data point, a named example, a non-obvious implication, or a direct practitioner observation. |

How to Get Your Law Firm Cited in AI Answers

Generative Engine Optimization (GEO) is the emerging discipline of structuring content, building authority, and earning citations in a way that AI systems recognize and trust. It does not replace traditional SEO — it extends it, built on the same organic foundation.

Step 1: Assess

Before optimizing, understand your current position. Are you being cited by AI systems at all? Across which platforms — ChatGPT, Perplexity, Google AI Mode, Gemini? For which types of queries?

Is your brand in the ‘consideration set’ when your target audience asks AI for recommendations in your category?

Critical: check whether any technical or content issues are already suppressing your organic rankings, because organic ranking damage translates directly to AI citation damage with a lag of weeks to months.

Step 2: Optimize

Structural optimization for AI retrieval requires:

- Passage-based content structure — clear, self-contained sections that AI can extract without needing surrounding context

- Heading hierarchies that function as questions — ‘How does X work?’ not ‘Our Approach to X’

- Front-loaded key insights — your most important claim in the first paragraph, not the conclusion

- FAQ sections at the end of key pages with schema markup

- Entity density — named tools, companies, people, and data points that signal domain expertise

- Third-party authority signals — being featured, cited, or referenced on authoritative external sites in your industry
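For the FAQ item in the list above, the markup follows the schema.org FAQPage type. The sketch below builds the JSON-LD from question and answer pairs. The function name and the sample question are hypothetical, and the output still needs to be placed in a script tag of type application/ld+json on the page.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


# Hypothetical example pair.
print(faq_jsonld([("How does X work?", "X works by doing Y in three steps.")]))
```

Keep the answers in the markup identical to the visible text on the page, so the structured data and the content never disagree.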

Step 3: Measure

Stop tracking ‘rank #1 in ChatGPT.’ Track Visibility % instead — across 100 or more varied prompt runs representing the full semantic neighborhood of your target queries. This accounts for the inherent probabilistic nature of AI responses and gives you a meaningful signal rather than noise.

Track separately for each AI platform: ChatGPT, Perplexity, Google AI Mode, and Gemini behave differently and draw from different sources. A Perplexity-specific strategy requires attention to non-Google citation signals that a ChatGPT strategy might not.
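Visibility % is simple to compute once the responses are collected. The sketch below assumes you have already saved the text of each AI response per platform. The function names are hypothetical, and plain substring matching is a crude stand-in for proper brand detection.

```python
def visibility_percent(responses: list[str], brand: str) -> float:
    """Share of responses (0-100) that mention the brand, case-insensitively."""
    if not responses:
        raise ValueError("need at least one response")
    needle = brand.lower()
    hits = sum(1 for text in responses if needle in text.lower())
    return 100.0 * hits / len(responses)


def visibility_by_platform(runs: dict[str, list[str]], brand: str) -> dict[str, float]:
    """Compute Visibility % separately for each platform's saved responses."""
    return {
        platform: visibility_percent(responses, brand)
        for platform, responses in runs.items()
    }
```

With 100 or more varied prompts per platform, the percentages become stable enough to compare month over month, which a single "rank" check never is.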

Step 4: Iterate

AI models update continuously. The content that earns citations today may lose relevance after the next training cycle. Maintain freshness by updating key assets regularly, documenting new data and client outcomes, and monitoring shifts in how your target audience phrases their queries — because prompt language evolves as users become more sophisticated AI users.

The Pay-to-Play Warning

A concerning dynamic has emerged in the GEO space: many of the third-party directory and review sites that carry the heaviest AI citation weight have begun charging brands for featured placement.

Publishers who were penalized for sponsored content in 2024 have returned to the same tactics under different labels, and these pages carry enormous weight in AI Overviews, AI Mode, and ChatGPT responses.

This creates an ethical and strategic tension.

Paying for directory placement on sites that Google considers spam-adjacent is a genuine risk. Before investing in any third-party citation strategy, evaluate whether the platform publishing your inclusion would survive a Google manual review of its business model.

Your 2026 AI Search Audit: Stop / Start

Based on the research synthesized in this guide, here is a practical audit framework for businesses evaluating their current position.

The left column identifies tactics that are actively harming performance. The right column identifies the actions with the highest return in the current environment.

| STOP DOING THIS | START DOING THIS |
| --- | --- |
| Writing self-promotional ‘Best of’ listicles ranking yourself #1 | Front-load your key insights in the first 30% of every page |
| Hiding your service area address (triggers phantom pin bug) | Optimize for your full semantic neighborhood, not one keyword |
| Tracking ‘Rank #1 in ChatGPT’. It’s a statistical lottery | Earn citations on trusted third-party industry authority sites |
| Scaling AI-generated content without real expertise behind it | Track Visibility % across 100+ AI prompt variations |
| Over-engineering your site with heavy JavaScript frameworks | Produce original data, tools, and genuinely expert content |
| Ignoring what happens to your rankings when you’re closed | Maintain consistent NAP data across every platform |
| Letting siloed SEO, PPC, and social teams send mixed messages | Align every channel around one clear, differentiated message |

The Future Belongs to Brands Worth Remembering

Every framework in this guide points to the same underlying truth: the gap between doing marketing and doing it with scientific rigor has never been more consequential.

The systems evaluating your brand (search engines, AI models, review platforms, recommendation engines) are designed to identify entities that are useful, true, and integral to their understanding of a domain.

They are increasingly effective at filtering out everything else.

Jono Alderson captures the new competitive reality precisely: ‘Most campaigns are built to capture a moment. Few are built to survive a model update.’

The brands that will dominate in 2026 and beyond are not the ones chasing the latest algorithm trick. They are the ones building genuine expertise, documenting it consistently, earning recognition from credible external sources, and maintaining the technical integrity that machines require to trust them.

Marketing has always been about the science behind the success: understanding cause and effect clearly enough to engineer outcomes, not just hope for them.

What has changed is that the audience judging you is increasingly a machine. And machines are harder to fool, slower to forget, and less forgiving of inconsistency than any human audience has ever been.

The question is not whether your industry will be affected by AI search. Every industry already is. The question is whether your brand will be part of the answer AI gives or absent from it entirely.

Sources & Methodology

This guide synthesizes research and analysis from the following industry experts and publications, published between Q3 2025 and February 2026:

- Lily Ray — AI citation correlation research; algorithm update analysis (Substack)

- Rand Fishkin — AI recommendation consistency research; Pinball Journey framework; 2026 marketing predictions (SparkToro)

- Amanda Natividad — Prompt diversity research with Gumshoe; Vibes Meet Facts content framework (SparkToro)

- Jono Alderson — Machine Immune System framework; Surfaceless Web analysis; keyword entity model (jonoalderson.com)

- Kevin Indig — LLM citation pattern analysis; 1.2M AI answer study

- Miriam Ellis — Local ranking factor research; review and citation signals (Whitespark)

- David McSweeney — ChatGPT architecture analysis

- Jenny Williamson — Fanout keyword and AI prompt intelligence research

- Metehan Yesilyurt — Google Discover SDK architecture research (Search Engine Land)

- Search Engine Land — Google Discover pipeline research; algorithm update coverage

Research compilation methodology: Over a gigabyte of 2026 AI and search reports were processed through NotebookLM for pattern synthesis. Raw articles and synthesized resources were then analyzed through Claude (Anthropic) for insight distillation. All statistics cited in this guide are sourced directly from the original research listed above.